Written for Knowledge Exchange by Andrea Chiarelli (Research Consulting)

A recent report by the European Commission called "on all European Member States and other relevant actors from the public and private sectors to help co-create, develop and maintain a 'Research System based on shared knowledge' by 2030". The report frames such a system as the next stage in the change process initiated by the open science movement, and we would argue that the reproducibility of research will play an important role in this context.

Reproducibility is a core element of open scholarship and, more broadly, it enhances trust in, and the reliability of, scientific findings. For the purposes of this blog post, we tentatively define reproducibility as cases where researchers use the data and procedures (e.g. the same code) shared by others to obtain the same results as in the original study. Past studies have shown that reproducibility remains a significant issue in today's research enterprise, partly because it has implications across all stages of the research lifecycle, from discovery and research planning to publication and dissemination.

This last step, the publication and dissemination of reproducible research outputs, is currently being investigated by Knowledge Exchange (KE), a partnership of national organisations within Europe tasked with developing infrastructure and digital services to improve research and higher education.

The "Publishing Reproducible Research Output" activity at KE

The KE activity on "Publishing Reproducible Research Output" is being led by a group of international experts, with support from Research Consulting and academic partners from the universities of Hasselt and Oxford.

By investigating current practices and barriers in the publication of reproducible research outputs, this KE activity seeks to determine how technical and social infrastructures can support future developments. As part of this work, we will conduct an online survey, focus groups and interviews exploring the key issues faced by researchers, funders and infrastructure providers.

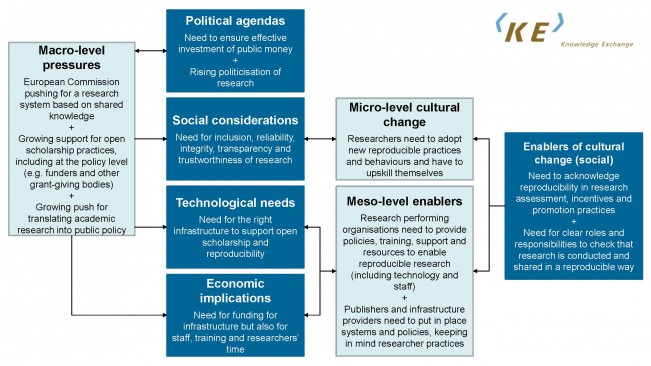

We are using the KE Open Scholarship Framework as a lens to analyse the reproducibility landscape, considering a combination of levels (micro-, meso- and macro-level), arenas (political, economic, social, technical) and research phases (discovery, planning, project phase, dissemination). Figure 1 summarises the complex interplay of actors, issues and recent developments when it comes to reproducibility. In particular, it shows how the expectations of funders and policy makers (macro level) are driving concerns in different arenas, which in turn have an impact on researchers (micro level) as well as on institutions and infrastructure providers (meso level).

As our activity begins to take shape, we think that three key considerations will deserve particular attention.

#1 There is no shared understanding of research reproducibility

In 2012, the scientific discourse started including reports of a "replication crisis", which is sometimes also referred to as a "reproducibility" or "replicability" crisis. The replication crisis revolved around the fact that researchers collecting new data could not obtain the same results as the original authors, and one aspect of this problem relates to the reproducibility of research outputs. If the data and procedures (e.g. code) of the original study are not documented and published, its results can be neither reproduced nor replicated.

The above clearly conveys the complexity of this area, and the confusion (and potential overlap) around terminology certainly complicates discussions. Focusing on the publication and dissemination phase of research, we note that an influential article estimated that over 50% of studies are never published in full. Biased or incomplete reporting, in which researchers publish positive results and leave negative results unpublished, is currently recognised as one of the most damaging problems in science.

Although the above concerns are often perceived as the individual responsibility of researchers, systemic issues linked to demands and incentives are most often found to be the culprit, which leads to our second point.

#2 The "publish or perish" culture is not conducive to reproducible research

There is growing criticism of current customs in academic publishing (see Figure 2), but changing research cultures is an extremely complex matter.

Ongoing discussions around the so-called "publish or perish" culture, whereby scholars who publish infrequently may lose ground in the competition for available academic roles, tend to focus on the following elements:

- the lack of rewards for practices that enhance the quality and reproducibility of research;

- an over-reliance on inadequate metrics;

- a focus on productivity and outputs; and

- excessive competition in academia.

Unsurprisingly, all of these lead to significant pressures on individual researchers, which in turn can underpin poor data sharing practices and strongly affect how and where academics publish their findings. In addition, the "publish or perish" culture has led high-profile journals to become more selective in the face of increasing submissions and to favour results that appear impressive or ground-breaking.

Although scholarly journals are the most prominent pathway to disseminate research, let's not forget that they aren't the only infrastructure that can support reproducibility, nor are they the only means to shift scholarly collaboration and communication practices.

#3 We need to acknowledge the diversity of solutions for reproducible research

A recent Jisc study discussed the wide range of practical considerations and technical solutions that can enable the publication of reproducible research outputs. For example, the booming popularity of preprint servers has been enhancing the speed and transparency of the scientific discourse, including in the context of COVID-19. In addition, reporting checklists and data and code availability statements have become part of the publishing requirements of several journals, alongside broader open science and open data policies.

Reproducibility badging has also recently been proposed by the National Information Standards Organization (NISO) for the computational and computing sciences, and we note that the use of badges by journals has been found to have a positive impact on researchers' behaviours.

In view of these activities and developments, we believe that things are moving in the right direction. However, there are still many open questions when it comes to connecting reproducibility issues with culture change and with the technical solutions available to help researchers create more reproducible research outcomes: we look forward to investigating this evolving landscape over the course of 2021!

Get in touch

We would welcome any opportunities to engage the reproducible research community (including individual researchers and representatives of universities, service providers and research funders). Feel free to share any suggestions or thoughts on our work on Twitter at @knowexchange or @rschconsulting or via email at juliane.kant@dfg.de or andrea.chiarelli@research-consulting.com.